Graphs Counterfactual Explainability: A Comprehensive Landscape (AAAI 2024)

Tutorial at the Thirty-Eighth AAAI Conference on Artificial Intelligence (AAAI-24), 20-21 February 2024

Organisers

Mario A. Prado-Romero

Dr. Bardh Prenkaj

Prof. Giovanni Stilo

Slides are available for download.

Table of contents

- Abstract

- Duration

- Scope of the tutorial

- Prerequisites

- Outline & Contents

- Acknowledgement

- Meet the Speakers

- Bibliography

Highlights

The tutorial is based on our ACM Computing Surveys article “A Survey on Graph Counterfactual Explanations: Definitions, Methods, Evaluation, and Research Challenges”. Use the following BibTeX to cite our paper.

@article{10.1145/3618105,
  author = {Prado-Romero, Mario Alfonso and Prenkaj, Bardh and Stilo, Giovanni and Giannotti, Fosca},
  title = {A Survey on Graph Counterfactual Explanations: Definitions, Methods, Evaluation, and Research Challenges},
  year = {2023},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  issn = {0360-0300},
  url = {https://doi.org/10.1145/3618105},
  doi = {10.1145/3618105},
  journal = {ACM Computing Surveys},
  month = {sep}
}

Abstract

Graph Neural Networks (GNNs) have proven highly effective in graph-related tasks, including traffic modeling, learning physical simulations, protein modeling, and large-scale recommender systems. The focus has since shifted towards models that not only deliver accurate results but also provide understandable and actionable insights into their predictions.

Counterfactual explanations have emerged as crucial tools for meeting this need and empowering users.

For these reasons, this tutorial provides the essential theoretical foundations for generating counterfactual explanations for GNNs, equipping the audience to better understand the outcomes produced by their systems and to help users interpret predictions effectively.

First, we introduce GNNs, their underlying message-passing architecture, and the challenges of providing post-hoc explanations for their predictions across different domains.

Then, we provide a formal definition of Graph Counterfactual Explainability (GCE) and discuss its potential to provide recourse to users.

Furthermore, we propose a taxonomy of existing GCE methods and give insights into each approach’s main idea, advantages, and limitations.

Finally, we introduce the most frequently used benchmark datasets, evaluation metrics, and protocols, and analyze future challenges in the field.

Duration

The tutorial lasts a quarter-day (1 hour 45 minutes), which we consider the most suitable format for introducing attendees to counterfactual explanations on graphs and their foundational concepts. This duration strikes a balance between providing a comprehensive understanding of the topic and not overwhelming participants with technical details that may be less relevant to those with limited technical backgrounds. To complement this tutorial, we offer an additional lab session on the same topic for those interested in diving into the technical aspects of Graph Counterfactual Explanations.

Scope of the tutorial

The AAAI community has shown considerable interest in Graph Neural Networks and Explainable AI, as evidenced by tutorials in previous editions. Graph Counterfactual Explainability (GCE) can appeal to a broad audience of researchers and practitioners, owing to the pervasiveness of graphs and the ability of GCE methods to provide deep insights into complex non-linear prediction systems. Based on the recently published survey [1], this tutorial covers fundamental concepts related to Graph Neural Networks, post-hoc explanation methods, and counterfactual explanations. Furthermore, the audience will be given a detailed classification of existing GCE methods, an in-depth analysis of their underlying mechanisms, a qualitative comparison, and a benchmark of their performance on synthetic and real datasets, including brain networks and molecular structures.

The tutorial is aimed at practitioners in academia and industry interested in applying machine learning techniques to analyze graph data. Participants with a background in data mining will learn what information explanation methods can provide to end users. Those with machine learning (ML) expertise will delve deeper into state-of-the-art counterfactual explainers, critically analyzing their strengths and weaknesses.

Prerequisites

Familiarity with basic ML concepts will be beneficial for thoroughly understanding the tutorial.

Outline & Contents

Counterfactual explanations shed light on a model’s decision-making mechanism by providing alternative scenarios that yield different outcomes, thereby offering end users recourse. These distinctive characteristics make counterfactual explainability a highly effective approach in graph-based contexts. We adopt a multifaceted approach, examining GCE from several perspectives: a taxonomy of methods, their classification along various dimensions, detailed descriptions of individual works (including their evaluation processes), evaluation measures, and commonly used benchmark datasets. We provide a brief outline of the tutorial in Figure 1. Throughout, we comprehensively review counterfactual explainability in graphs and its importance relative to other (factual) explainability methods.

- Part I: Graph fundamentals (20 mins)
  - Widespread adoption of GNNs in graph prediction problems
  - How do GNNs work?
  - Applications of different types of GNNs
- Part II: eXplainable AI (30 mins)
  - Issues of black-box models and the importance of interpretability
  - What is a factual explanation?
  - (Briefly) Revisiting GNNExplainer and GraphLIME
- Part III: Counterfactual Explanations in Graphs (55 mins)
  - What is a graph counterfactual explanation (GCE)?
  - GCE taxonomy description and method classification
    - Model-level explainers
    - Instance-level explainers
      - Search-based
      - Heuristic-based
      - Learning-based
  - Benchmarking datasets and evaluation metrics (pros and cons)

Figure 1: Brief outline of the tutorial and its schedule.

In the first part of the tutorial, we introduce GNNs [2] and the message-passing mechanism they use to solve graph prediction problems. We then delve into the different types of GNNs and their application to real-world problems [3], such as protein-protein interaction, drug-target affinity prediction, and anomaly detection in social networks.
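To make the message-passing idea concrete, here is a minimal NumPy sketch of one round of mean-aggregation message passing followed by a mean-pooling readout, in the spirit of a simplified GCN-style layer. The function names, the mean aggregator, and the random weights are illustrative assumptions, not the formulation of [2] or the API of any GNN library; real layers use learned weights, often a symmetrically normalised adjacency, and several stacked layers.

```python
import numpy as np


def message_passing_layer(adj: np.ndarray, features: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One round of mean-aggregation message passing (simplified GCN-style layer).

    adj:      (n, n) 0/1 adjacency matrix of an undirected graph
    features: (n, d_in) node feature matrix
    weight:   (d_in, d_out) weight matrix (learned in practice, random here)
    """
    # Add self-loops so every node also keeps its own features.
    adj_hat = adj + np.eye(adj.shape[0])
    # Each node averages the messages (features) of itself and its neighbours.
    degree = adj_hat.sum(axis=1, keepdims=True)
    aggregated = (adj_hat @ features) / degree
    # Update step: linear transform followed by a ReLU non-linearity.
    return np.maximum(aggregated @ weight, 0.0)


def graph_readout(node_embeddings: np.ndarray) -> np.ndarray:
    # Mean pooling turns node embeddings into one graph-level embedding,
    # which a classifier head would consume for graph prediction tasks.
    return node_embeddings.mean(axis=0)


# Toy example: 3-node path graph 0-1-2 with one-hot node features.
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
x = np.eye(3)
w = np.random.default_rng(0).normal(size=(3, 4))
h = message_passing_layer(adj, x, w)
print(h.shape, graph_readout(h).shape)  # (3, 4) (4,)
```

Stacking k such layers lets each node’s embedding depend on its k-hop neighbourhood, which is precisely the entangled computation that post-hoc explainers later have to unpack.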

In the second part, we discuss the challenges of deploying black-box models in critical scenarios and how post-hoc interpretability helps uncover “what is happening under the hood”. Here, we introduce factual explanations [4] and briefly revisit the most prominent methods in this category, namely GNNExplainer [5] and GraphLIME [6].
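GNNExplainer learns a soft mask over edges and features by optimisation, while GraphLIME fits an interpretable local surrogate over node features. As a much simpler stand-in for the same question a factual explanation answers (which parts of the input support the current prediction?), the sketch below scores each edge by occlusion: remove it and measure how much the model’s output drops. The triangle-counting `toy_model` is a hypothetical placeholder for a trained GNN, and occlusion is a swapped-in baseline, not the algorithm of [5] or [6].

```python
import math
from itertools import combinations

Edge = tuple[int, int]  # undirected edge stored as (smaller id, larger id)


def count_triangles(edges: set[Edge], n: int) -> int:
    # Count node triples whose three connecting edges are all present.
    return sum(
        {(a, b), (a, c), (b, c)} <= edges
        for a, b, c in combinations(range(n), 3)
    )


def toy_model(edges: set[Edge], n: int) -> float:
    # Hypothetical black-box classifier (placeholder for a trained GNN):
    # the probability of the "positive" class grows with the triangle count.
    return 1.0 / (1.0 + math.exp(-(2 * count_triangles(edges, n) - 1)))


def occlusion_edge_importance(edges: set[Edge], n: int, model) -> dict[Edge, float]:
    # Importance of an edge = drop in the model score when that edge is removed.
    base = model(edges, n)
    return {e: base - model(edges - {e}, n) for e in edges}


# Triangle 0-1-2 plus a pendant edge 2-3.
graph = {(0, 1), (0, 2), (1, 2), (2, 3)}
scores = occlusion_edge_importance(graph, 4, toy_model)
for edge, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(edge, round(score, 3))
```

Here the three triangle edges receive equal, high importance while the pendant edge 2-3 scores zero, so the factual explanation is the triangle itself. Because occlusion removes one edge at a time, it misses interactions between edges, which is one motivation for jointly optimised masks such as GNNExplainer’s.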

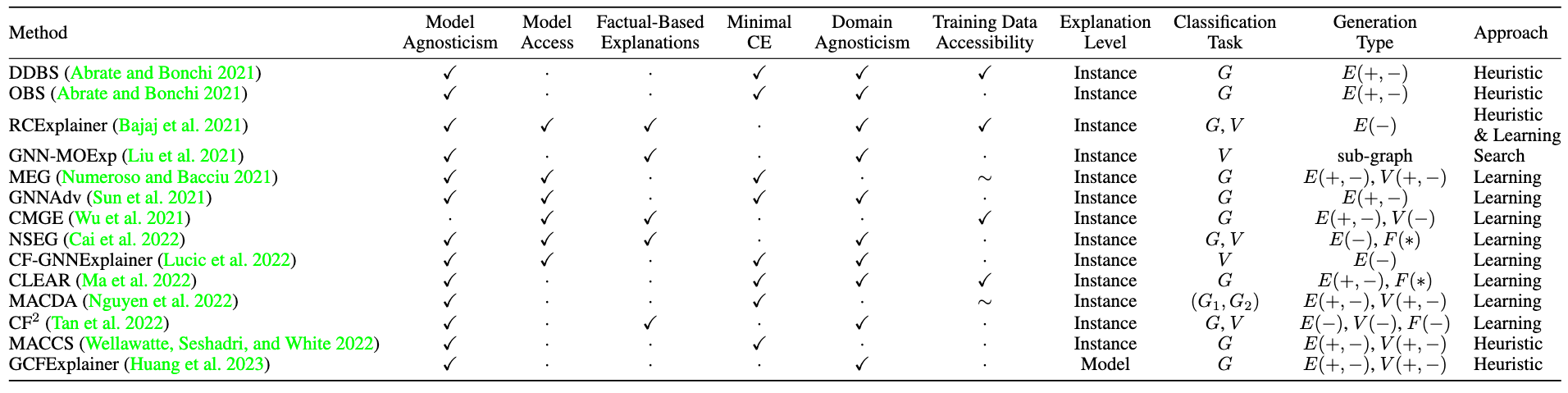

In the third part, we formalise the definition of Graph Counterfactual Explanation (GCE). We present a taxonomy of the most important references [1], categorised as in Table 1, including search-based explainers [7], heuristic-based ones [8,9,10], learning-based ones [9,11,12,13,14,15,16,17,18], and global-level explanation methods [19]. Additionally, we list the most common benchmarking datasets and a set of evaluation metrics [4,20] needed to assess the (dis)advantages of each counterfactual explainer in the literature. Furthermore, we submitted a lab session proposal that complements this tutorial with hands-on experience.
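A common way to formalise a graph counterfactual explanation is as the closest graph that receives a different prediction. In our own illustrative notation (the oracle $\Phi$, the distance $d$, and the candidate space $\mathcal{G}$ are assumed symbols, not necessarily those of [1]):

$$
G^{*} = \arg\min_{G' \in \mathcal{G}} \; d(G, G') \quad \text{subject to} \quad \Phi(G') \neq \Phi(G)
$$

The Python sketch below instantiates this with the most naive search-based strategy: enumerate edge flips in order of increasing size and stop at the first set that changes the label, so the result minimises edit distance by construction. It also computes two simple evaluation quantities, validity (does the label actually change?) and graph edit distance under a fixed node set. The triangle-detecting `toy_oracle` and all function names are hypothetical; this is not the algorithm of [7] or of any other cited explainer.

```python
from itertools import combinations

Edge = tuple[int, int]  # undirected edge stored as (smaller id, larger id)


def toy_oracle(edges: frozenset, n: int) -> int:
    # Hypothetical classifier standing in for the GNN under explanation:
    # label 1 if the graph contains at least one triangle, else 0.
    return int(any(
        {(a, b), (a, c), (b, c)} <= edges
        for a, b, c in combinations(range(n), 3)
    ))


def edit_distance(g1: frozenset, g2: frozenset) -> int:
    # With a fixed node set and no attributes, graph edit distance
    # reduces to the number of edges present in exactly one graph.
    return len(g1 ^ g2)


def search_counterfactual(edges, n: int, oracle, max_flips: int = 3):
    # Exhaustive search: try every set of 1, 2, ..., max_flips edge flips and
    # return the first graph with a different label (minimal edit distance).
    g = frozenset(edges)
    original = oracle(g, n)
    pairs = list(combinations(range(n), 2))  # every possible undirected edge
    for k in range(1, max_flips + 1):
        for flips in combinations(pairs, k):
            candidate = g ^ frozenset(flips)  # add absent edges, remove present ones
            if oracle(candidate, n) != original:
                return candidate
    return None  # no counterfactual within the flip budget


def validity(edges, cf, n: int, oracle) -> bool:
    # A counterfactual is valid only if it exists and flips the prediction.
    return cf is not None and oracle(cf, n) != oracle(frozenset(edges), n)


graph = {(0, 1), (0, 2), (1, 2), (2, 3)}  # triangle 0-1-2 plus pendant edge 2-3
cf = search_counterfactual(graph, 4, toy_oracle)
print(sorted(cf), edit_distance(frozenset(graph), cf), validity(graph, cf, 4, toy_oracle))
# [(0, 2), (1, 2), (2, 3)] 1 True
```

The search tries every subset of up to `max_flips` node pairs, so its cost grows combinatorially with graph size; this is the practical reason heuristic-based and learning-based explainers exist for realistic graphs.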

Table 1: The most important references that will be covered in the tutorial.

Acknowledgement

The “Graphs Counterfactual Explainability: A Comprehensive Landscape (Tutorial at AAAI 2024)” event was organised as part of the initiatives of ICSC, Centro Nazionale di Ricerca in High Performance Computing, Big Data e Quantum Computing (Prot. CN00000013), aimed at disseminating the project results to new communities and creating bridging opportunities. ICSC, Centro Nazionale di Ricerca in High Performance Computing, Big Data e Quantum Computing receives funding from the European Union – NextGenerationEU – National Recovery and Resilience Plan (Piano Nazionale di Ripresa e Resilienza, PNRR) – Project: CN00000013 – NATIONAL CENTRE FOR HPC, BIG DATA AND QUANTUM COMPUTING, Public Notice (Avviso pubblico) D.D. n. 3138 of 16.12.2021, as amended by D.D. 3175 of 18.12.2021.

Meet the Speakers

Mario Alfonso Prado-Romero

is a PhD fellow specialising in AI at the Gran Sasso Science Institute in Italy. His research focuses on the intersection of Explainable AI and Graph Neural Networks. Before this, he gained experience in related fields such as Anomaly Detection, Data Mining, and Information Retrieval. Notably, he is the key contributor to the GRETEL project, which offers a comprehensive framework for developing and assessing Graph Counterfactual Explanations. Additionally, he was selected by NEC Laboratories Europe as the only intern of the Human-Centric AI group in 2023, applying his expertise in eXplainable Artificial Intelligence (XAI) to Graph Neural Networks for Biomedical Applications.

Bardh Prenkaj

obtained his M.Sc. and PhD in Computer Science from the Sapienza University of Rome in 2018 and 2022, respectively. He then worked as a senior researcher at the Department of Computer Science in Sapienza, where he led a team of four junior researchers and six software engineers in devising novel anomaly detection strategies for social isolation disorders. Since October 2022, he has been a postdoc at Sapienza in eXplainable AI and Anomaly Detection. He is a member of, and actively collaborates with, Italian and international research groups (RDS of TUM, PINlab of Sapienza, AIIM of UnivAQ, and DMML of GMU). He serves as a Program Committee (PC) member for conferences such as ICCV, CVPR, KDD, CIKM, and ECAI, and as a reviewer for journals including TKDE, TKDD, VLDB, TIST, and KAIS.

Giovanni Stilo

is an associate professor of Computer Science and Data Science at the University of L’Aquila, where he leads the Master’s Degree in Data Science, and he is part of the Artificial Intelligence and Information Mining collective. He received his PhD in Computer Science in 2013, and in 2014 he was a visiting researcher at Yahoo! Labs in Barcelona. His research interests are related to machine learning, data mining, and artificial intelligence, with a special interest in (but not limited to) trustworthiness aspects such as Bias, Fairness, and Explainability. Specifically, he heads the GRETEL project, which is devoted to empowering research in the Graph Counterfactual Explainability field. He has co-organized a long series (2020-2023) of top-tier international events and journal special issues focused on Bias and Fairness in Search and Recommendation. He serves on the editorial boards of IEEE, ACM, Springer, and Elsevier journals such as TITS, TKDE, DMKD, AI, KAIS, and AIIM. He is responsible for “New technologies for data collection, preparation, and analysis” in the Territory Aperti project, coordinator of the activities on “Responsible Data Science and Training” of the PNRR SoBigData.it project, and PI of the “FAIR-EDU: Promote FAIRness in EDUcation Institutions” project. Throughout his academic career, he has devoted much of his attention to teaching and tutoring, working on more than 30 national and international theses (at B.Sc., M.Sc., and PhD levels). In more than ten years of academia, he has taught university-level courses on ten different topics, at different degree levels, in the scientific field of Computer and Data Science.

Bibliography

[1] Mario Alfonso Prado-Romero, Bardh Prenkaj, Giovanni Stilo, and Fosca Giannotti. A Survey on Graph Counterfactual Explanations: Definitions, Methods, Evaluation, and Research Challenges. ACM Comput. Surv. (September 2023). https://doi.org/10.1145/3618105

[2] F. Scarselli, M. Gori, A. C. Tsoi, M. Hagenbuchner and G. Monfardini, The Graph Neural Network Model, in IEEE Transactions on Neural Networks, vol. 20, no. 1, pp. 61-80, Jan. 2009, https://doi.org/10.1109/TNN.2008.2005605

[3] Z. Wu, S. Pan, F. Chen, G. Long, C. Zhang and P. S. Yu, A Comprehensive Survey on Graph Neural Networks, in IEEE Transactions on Neural Networks and Learning Systems, vol. 32, no. 1, pp. 4-24, Jan. 2021, https://doi.org/10.1109/TNNLS.2020.2978386

[4] H. Yuan, H. Yu, S. Gui and S. Ji, Explainability in Graph Neural Networks: A Taxonomic Survey, in IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 45, no. 5, pp. 5782-5799, 1 May 2023, https://doi.org/10.1109/TPAMI.2022.3204236

[5] Ying, Z., Bourgeois, D., You, J., Zitnik, M., and Leskovec, J. 2019. GNNExplainer: Generating Explanations for Graph Neural Networks. Advances in Neural Information Processing Systems, 32.

[6] Q. Huang, M. Yamada, Y. Tian, D. Singh and Y. Chang, GraphLIME: Local Interpretable Model Explanations for Graph Neural Networks, in IEEE Transactions on Knowledge and Data Engineering, vol. 35, no. 7, pp. 6968-6972, 1 July 2023, https://doi.org/10.1109/TKDE.2022.3187455

[7] Y. Liu, C. Chen, Y. Liu, X. Zhang and S. Xie, Multi-objective Explanations of GNN Predictions, 2021 IEEE International Conference on Data Mining (ICDM), Auckland, New Zealand, 2021, pp. 409-418, https://doi.org/10.1109/ICDM51629.2021.00052

[8] Carlo Abrate and Francesco Bonchi. 2021. Counterfactual Graphs for Explainable Classification of Brain Networks. In Proceedings of the 27th ACM SIGKDD Conference on Knowledge Discovery & Data Mining (KDD ’21). Association for Computing Machinery, New York, NY, USA, 2495–2504. https://doi.org/10.1145/3447548.3467154

[9] Bajaj, M., Chu, L., Xue, Z., Pei, J., Wang, L., Lam, P., and Zhang, Y. 2021. Robust Counterfactual Explanations on Graph Neural Networks. Advances in Neural Information Processing Systems, 34.

[10] Wellawatte GP, Seshadri A, White AD. Model agnostic generation of counterfactual explanations for molecules. Chem Sci. 2022 Feb 16;13(13):3697-3705. https://doi.org/10.1039/d1sc05259d

[11] Cai, R., Zhu, Y., Chen, X., Fang, Y., Wu, M., Qiao, J., and Hao, Z. 2022. On the Probability of Necessity and Sufficiency of Explaining Graph Neural Networks: A Lower Bound Optimization Approach. arXiv preprint arXiv:2212.07056

[12] Ana Lucic, Maartje A. Ter Hoeve, Gabriele Tolomei, Maarten De Rijke, Fabrizio Silvestri, CF-GNNExplainer: Counterfactual Explanations for Graph Neural Networks, in Proceedings of The 25th International Conference on Artificial Intelligence and Statistics, PMLR 151:4499-4511, 2022.

[13] Ma, J.; Guo, R.; Mishra, S.; Zhang, A.; and Li, J., 2022. CLEAR: Generative Counterfactual Explanations on Graphs. Advances in Neural Information Processing Systems, 35, 25895–25907.

[14] T. M. Nguyen, T. P. Quinn, T. Nguyen and T. Tran, Explaining Black Box Drug Target Prediction Through Model Agnostic Counterfactual Samples, in IEEE/ACM Transactions on Computational Biology and Bioinformatics, vol. 20, no. 2, pp. 1020-1029, 1 March-April 2023, https://doi.org/10.1109/TCBB.2022.3190266

[15] Numeroso, Danilo and Bacciu, Davide, MEG: Generating Molecular Counterfactual Explanations for Deep Graph Networks, 2021 International Joint Conference on Neural Networks (IJCNN). https://doi.org/10.1109/IJCNN52387.2021.9534266

[16] Sun, Y., Valente, A., Liu, S., & Wang, D. (2021). Preserve, Promote, or Attack? GNN Explanation via Topology Perturbation. ArXiv. https://arxiv.org/abs/2103.13944

[17] Juntao Tan, Shijie Geng, Zuohui Fu, Yingqiang Ge, Shuyuan Xu, Yunqi Li, and Yongfeng Zhang. 2022. Learning and Evaluating Graph Neural Network Explanations based on Counterfactual and Factual Reasoning. In Proceedings of the ACM Web Conference 2022 (WWW ’22). https://doi.org/10.1145/3485447.3511948

[18] Wu, H.; Chen, W.; Xu, S.; and Xu, B. 2021. Counterfactual Supporting Facts Extraction for Explainable Medical Record Based Diagnosis with Graph Network. In Proc. of the 2021 Conf. of the North American Chapter of the Assoc. for Comp. Linguistics: Human Lang. Techs., 1942–1955. https://aclanthology.org/2021.naacl-main.156/

[19] Zexi Huang, Mert Kosan, Sourav Medya, Sayan Ranu, and Ambuj Singh. 2023. Global Counterfactual Explainer for Graph Neural Networks. In Proceedings of the Sixteenth ACM International Conference on Web Search and Data Mining (WSDM ’23). Association for Computing Machinery, New York, NY, USA, 141–149. https://doi.org/10.1145/3539597.3570376

[20] Guidotti, R. Counterfactual explanations and how to find them: literature review and benchmarking. Data Min Knowl Disc (2022). https://doi.org/10.1007/s10618-022-00831-6